Introduction

Artificial Intelligence (AI) has advanced rapidly in handling diverse data such as text, images, video, and audio. But true intelligence needs more than perception: it also needs reasoning, thinking, and planning.

Large Multimodal Reasoning Models (LMRMs) aim to bridge that gap by combining:

- Perception (seeing, listening),

- Reasoning (logic and relations),

- Thinking & Planning (multi-step decision-making),

- and Execution (responding or acting).

Why Do We Need This Survey?

Modern models like GPT-4V and Gemini can process more than one modality at once, for example images alongside text (and, for Gemini, audio). But we still need answers to questions like:

- How do these models actually “think”?

- Can they reason reliably and safely in the real world?

Key Reasons:

- Growing Complexity: Models now process multiple input types, which makes reasoning much harder.

- Better Decision-Making: LMRMs improve logic and planning across modalities.

- Research Gap: We need a structured overview of recent advances in multimodal reasoning.

What Are Large Multimodal Reasoning Models (LMRMs)?

LMRMs are AI systems designed to:

- Perceive: Understand inputs like images, videos, speech, and text.

- Reason: Link ideas, understand context, and make logical inferences.

- Think & Plan: Break down tasks and prepare step-by-step strategies.

- Execute: Act or respond across formats (text, image, robot action).

Examples:

- GPT-4V – Processes both text and images.

- Flamingo – Vision + language reasoning (DeepMind).

- Gato – Unified model for robotics, images, and control tasks.

How Do LMRMs Work?

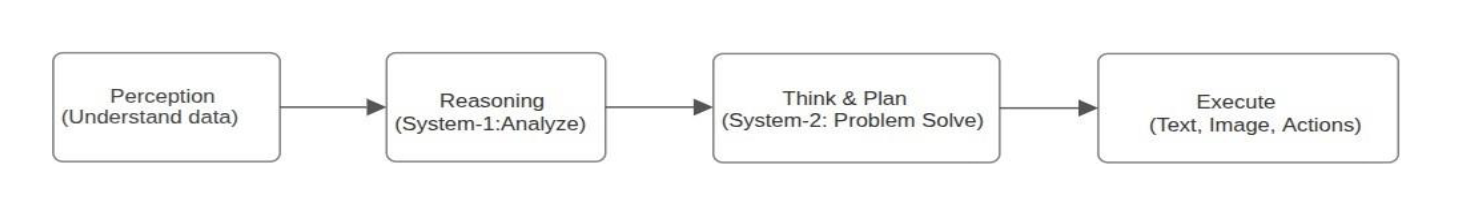

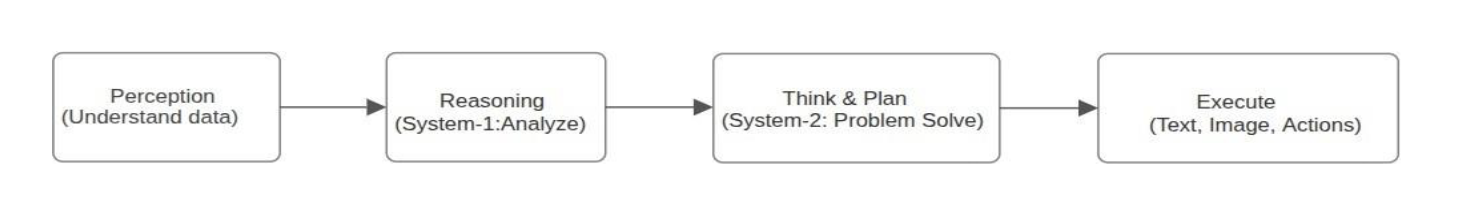

Let’s understand how Large Multimodal Reasoning Models (LMRMs) work using a 4-stage modular reasoning architecture:

Stage 1: Perception – Perception-Driven Modular Reasoning

This stage handles inputs such as images, video, text, or audio and transforms them into embeddings that a model can process. It uses tools like Vision Encoders (CLIP, ViT) and Text Encoders (BERT, T5), with model types such as Modular Reasoning Networks and Vision-Language Models (VLMs). The main goal is to convert real-world data into a shared machine-friendly representation.
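As a toy illustration of the shared representation described above, the sketch below projects features from two modalities into one vector space and compares them by cosine similarity. The projection matrices, feature values, and function names are all hypothetical and hand-picked for this example; real encoders such as CLIP learn these mappings from data.

```python
import math

def project(features, weights):
    """Apply a linear projection: one output value per weight row."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical projections into a 2-d shared space (learned in a real system).
IMAGE_PROJ = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]  # 3-d image features -> 2-d
TEXT_PROJ = [[1.0, 0.0], [0.0, 2.0]]             # 2-d text features  -> 2-d

image_vec = project([0.0, 1.0, 1.0], IMAGE_PROJ)  # stand-in for an image
caption_a = project([0.0, 1.0], TEXT_PROJ)        # stand-in for a matching caption
caption_b = project([1.0, 0.0], TEXT_PROJ)        # stand-in for an unrelated caption

match_score = cosine(image_vec, caption_a)
mismatch_score = cosine(image_vec, caption_b)
```

Once both modalities live in the same space, a single similarity function can compare an image with any candidate caption, and that is the property later stages rely on.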

Stage 2: Reason – Language-Centric Short Reasoning (System-1)

This is a fast, intuitive reasoning stage that works mainly through language. It helps in answering simple queries or identifying objects. Techniques include Relational Reasoning, Procedural Reasoning, Tool Use, and Visual Experts. Models like MiniGPT-4, Kosmos, and LLaVA are examples. The goal here is to make quick, shallow decisions efficiently.
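To make "quick, shallow decisions" concrete, here is a minimal sketch of a one-step relational query over the output of a perception stage. The `DetectedObject` type, the `is_left_of` helper, and the scene values are hypothetical and exist only for illustration; they are not taken from any of the models named above.

```python
from dataclasses import dataclass

@dataclass
class DetectedObject:
    label: str
    x_center: float  # horizontal position in the image, 0.0 (left) to 1.0 (right)

def is_left_of(objects, first, second):
    """Answer 'is <first> left of <second>?' with a single comparison, no planning."""
    positions = {obj.label: obj.x_center for obj in objects}
    if first not in positions or second not in positions:
        return None  # the perception stage did not report one of the objects
    return positions[first] < positions[second]

# Hypothetical perception output for one image.
scene = [DetectedObject("cup", 0.2), DetectedObject("laptop", 0.7)]
answer = is_left_of(scene, "cup", "laptop")
```

The answer comes from one lookup and one comparison, with no intermediate steps. That is the contrast with the deliberate, multi-step reasoning of the next stage.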

Stage 3: Think & Plan – Language-Centric Deep Reasoning (System-2)

This stage performs slow, deliberate reasoning for complex problems. It's used in multi-step planning and decision-making. It leverages Chain-of-Thought Prompting, Tool-Augmented Planning, and Cross-Modal Reasoning. Example models include MM-ReAct, CoT-GPT, and Reason+ReAct. The aim is to allow the model to deeply understand and plan before acting.
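The following is a minimal sketch of a tool-augmented, think-then-act loop in the spirit of the techniques listed above. The `scripted_policy` function is a hypothetical stand-in for a language model and simply returns hard-coded thoughts and actions; the tool registry and function names are likewise assumptions made for this example.

```python
def calculator(expression):
    """Toy tool: evaluate 'a op b' where op is +, - or *."""
    left, op, right = expression.split()
    a, b = float(left), float(right)
    return {"+": a + b, "-": a - b, "*": a * b}[op]

TOOLS = {"calculator": calculator}

def scripted_policy(question, history):
    """Hypothetical stand-in for a model: returns (thought, action, argument)."""
    if not history:
        return ("First compute the cost of 3 items at 4 each.", "calculator", "3 * 4")
    if len(history) == 1:
        subtotal = history[0][1]
        return ("Now add the shipping fee of 5.", "calculator", f"{subtotal} + 5")
    return ("I have the total.", "finish", history[-1][1])

def run_agent(question, policy, max_steps=5):
    """Alternate between a reasoning step and a tool call until the policy finishes."""
    history = []  # (tool argument, observation) pairs seen so far
    trace = []    # the intermediate thoughts, kept for inspection
    for _ in range(max_steps):
        thought, action, argument = policy(question, history)
        trace.append(thought)
        if action == "finish":
            return argument, trace
        observation = TOOLS[action](argument)
        history.append((argument, observation))
    return None, trace  # step budget exhausted without an answer

total, thoughts = run_agent("What do 3 items at 4 each cost with 5 shipping?", scripted_policy)
```

Each iteration records a thought, calls a tool, and feeds the observation back into the next decision, so the final answer depends on a chain of intermediate results rather than a single lookup.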

Stage 4: Execute – Native Multimodal Reasoning

This final stage generates outputs like text, images, or real-world actions based on all prior stages. It powers applications such as robot movement, GUI interaction, or autonomous driving. Real-world agents include Embodied AI, GUI Agents, and self-driving cars. Models like GPT-4V, Gato, Qwen-VL, and Gemini 1.5 Pro are used. The goal is to deliver an intelligent multimodal response or action.
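To show how the four stages might hand results to one another, here is a minimal end-to-end sketch. Every function is a hypothetical stub: nothing here encodes real data or calls a real model, and the function names and record formats are assumptions made for illustration.

```python
def perceive(raw_inputs):
    """Stage 1 stand-in: tag each input with its modality (no real encoding)."""
    return [{"modality": m, "content": c} for m, c in raw_inputs.items()]

def reason(percepts):
    """Stage 2 stand-in: a quick check of which modalities are present."""
    return sorted(p["modality"] for p in percepts)

def plan(goal, modalities):
    """Stage 3 stand-in: expand the goal into ordered steps."""
    steps = [f"inspect {m}" for m in modalities]
    steps.append(f"respond to: {goal}")
    return steps

def execute(steps):
    """Stage 4 stand-in: emit one action record per planned step."""
    return [{"step": i + 1, "action": s} for i, s in enumerate(steps)]

def run_pipeline(raw_inputs, goal):
    """Chain the four stages: perceive -> reason -> plan -> execute."""
    percepts = perceive(raw_inputs)
    modalities = reason(percepts)
    return execute(plan(goal, modalities))

actions = run_pipeline(
    {"text": "Where is the cup?", "image": "kitchen.png"},
    "locate the cup",
)
```

In a real system each stub would be replaced by a learned component, but the data flow stays the same: perception output feeds reasoning, reasoning feeds planning, and the plan drives the final actions.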


Real-World Applications

- Medical Diagnosis – Combine reports, scans, and patient history.

- Robotics – Perceive and act in physical environments.

- Education – Teach concepts using video, images, and text.

- Customer Support – Understand voice, screenshots, and queries.

- Scientific Research – Analyze charts, graphs, and papers together.

Future Improvements

- Long-Term Memory – Store and recall past experiences like humans.

- Better Planning – Handle long-term, multi-step tasks.

- Real-Time Actions – Improve speed and timing in live settings.

- Multimodal Datasets – Train with more diverse, real-world data.

- Safe Reasoning – Reduce hallucinations and biases.

Key Benefits of LMRMs

- Smarter Agents – Understand and act on multiple data types.

- Better Insights – Combine visual + text + sound for deeper understanding.

- Real-Time Use – Useful in robotics, healthcare, finance, etc.

- More Human-Like Reasoning – Closer to how people think.

Final Thoughts

This survey presents a full roadmap for building AI systems that can perceive, reason, think, and act, just like humans. Such systems would be able to:

- See, hear, and read the world (Perception)

- Reason quickly and deeply (System-1 & System-2)

- Create structured plans (Planning)

- Act in real-time (Execution)

As research continues, LMRMs will be at the core of building trustworthy, multimodal, intelligent agents that work side-by-side with humans in the real world.