A new framework lets AI agents teach themselves to become expert web searchers, beating previous state-of-the-art results on multi-hop question-answering benchmarks without any human-annotated data.

The world is captivated by Large Language Models (LLMs), the AI brains that power services like ChatGPT. While impressive, their true potential is unlocked when they can interact with the real world using tools like a web browser to find answers to complex, up-to-the-minute questions. Why? To move beyond their stored knowledge and provide truly dynamic, accurate, and deeply researched answers.

But there's a catch. Teaching an AI to navigate the web effectively is a monumental challenge. Current methods are caught between a rock and a hard place: they either require incredibly expensive, human-created datasets of perfect search examples or they use trial-and-error learning that often gets stuck and fails to learn the most effective strategies.

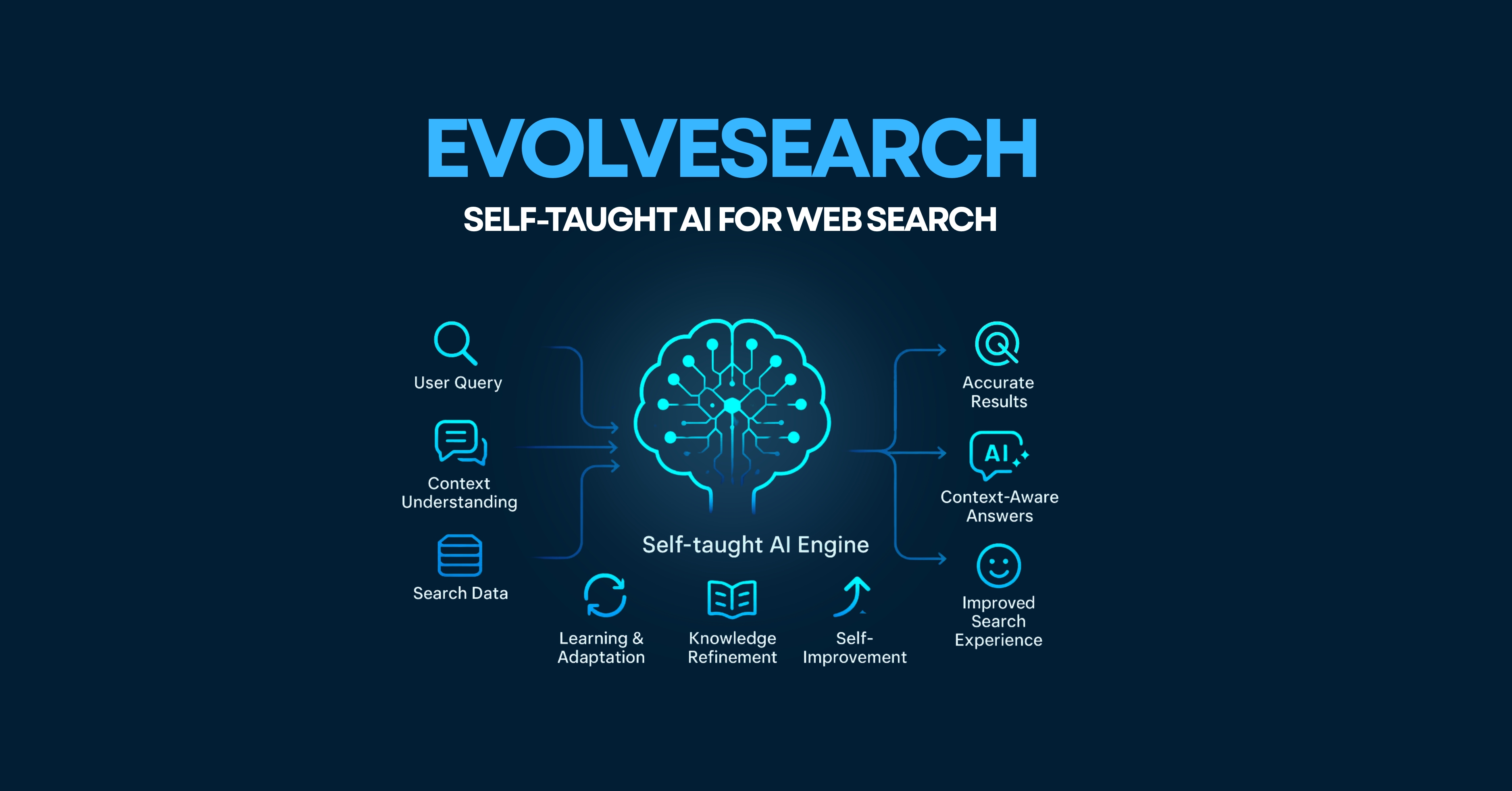

Enter EvolveSearch, a groundbreaking framework designed to create a self-taught web-browsing expert. It's like giving an AI a map, a compass, and the ability to learn from its journeys, getting progressively smarter with every trip.

What is EvolveSearch?

At its core, EvolveSearch is an iterative self-evolution framework that synergistically combines two powerful machine learning techniques: Reinforcement Learning (RL) and Supervised Fine-Tuning (SFT). It's specifically built to enhance the web search capabilities of LLM-based agents.

The goal is simple but ambitious: to create a search agent that consistently improves its performance over time by learning from its own experience, completely eliminating the need for external, human-annotated reasoning data.

How Does EvolveSearch Work Its Magic?

EvolveSearch isn't a single trick; it's a clever, repeating two-stage cycle that bootstraps the AI's own performance.

Stage 1: Exploration and Discovery (Reinforcement Learning)

First, the agent is let loose in a web search environment. For a given question, it generates a "rollout": a sequence of thoughts and actions aimed at finding the answer. It is rewarded on two counts: for following the correct thinking-and-acting format, and for finding the right answer. This phase is all about exploration, allowing the model to try out different search strategies, make mistakes, and discover what works.
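To make the two reward signals concrete, here is a minimal sketch of how a format check and an answer check might be combined. Everything specific in it is an assumption for illustration: the `<think>` and `<answer>` tag names, the exact-match comparison, and the `format_weight` value are not taken from the EvolveSearch paper.

```python
import re

# Hypothetical tag names: the article only says a rollout must follow a
# thinking-and-acting format, not what that format looks like.
THINK_RE = re.compile(r"<think>.*?</think>", re.DOTALL)
ANSWER_RE = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def format_reward(rollout: str) -> float:
    """Return 1.0 if the rollout has at least one thought and exactly one answer."""
    has_thought = THINK_RE.search(rollout) is not None
    one_answer = len(ANSWER_RE.findall(rollout)) == 1
    return 1.0 if has_thought and one_answer else 0.0


def answer_reward(rollout: str, gold: str) -> float:
    """Return 1.0 if the extracted answer equals the gold answer after normalizing."""
    match = ANSWER_RE.search(rollout)
    if match is None:
        return 0.0
    return 1.0 if normalize(match.group(1)) == normalize(gold) else 0.0


def total_reward(rollout: str, gold: str, format_weight: float = 0.1) -> float:
    """Weighted sum of both signals; the weight is an illustrative guess."""
    return format_weight * format_reward(rollout) + answer_reward(rollout, gold)
```

With this sketch, a well-formed rollout with the right answer scores highest, a well-formed rollout with the wrong answer still earns the small format bonus, and a malformed rollout earns nothing.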

Stage 2: Refining the Strategy (Rejection Sampling Fine-Tuning)

This is where the "self-teaching" happens. EvolveSearch doesn't learn from every attempt. Instead, it acts as its own strict teacher by filtering the rollouts from the RL phase using three key rules:

High-Reward Selection: It keeps only the attempts that earned a high reward (i.e., found the correct answer).

Same Query Deduplication: For multiple attempts on the same question, it keeps the one that used its tools the most, encouraging more diverse and thorough search paths.

Multi-Calls Selection: It prioritizes rollouts that involve multiple search calls, as these represent more complex and meaningful reasoning.
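The three rules above can be sketched as a small filtering function. This is a rough illustration under stated assumptions, not the authors' implementation: the `Rollout` fields, the `reward_threshold` and `min_tool_calls` values, the order in which the rules run, and the choice to treat "prioritizes" as a hard cutoff are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Rollout:
    """Hypothetical record of one attempt; field names are assumptions."""
    query: str
    text: str
    reward: float
    num_tool_calls: int


def select_for_sft(
    rollouts: List[Rollout],
    reward_threshold: float = 1.0,  # assumed cutoff for "high reward"
    min_tool_calls: int = 2,        # assumed meaning of "multiple search calls"
) -> List[Rollout]:
    """Apply the three filtering rules and return the curated rollouts."""
    # High-Reward Selection: drop attempts that did not score well.
    kept = [r for r in rollouts if r.reward >= reward_threshold]

    # Multi-Calls Selection: modeled here as a hard minimum on tool calls.
    kept = [r for r in kept if r.num_tool_calls >= min_tool_calls]

    # Same Query Deduplication: per question, keep the attempt with the most
    # tool calls (the first one seen wins on ties).
    best: Dict[str, Rollout] = {}
    for r in kept:
        current = best.get(r.query)
        if current is None or r.num_tool_calls > current.num_tool_calls:
            best[r.query] = r
    return list(best.values())
```

Note that a failed attempt with many tool calls is still discarded, because the reward filter runs before deduplication in this sketch.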

This curated dataset of "best attempts" is then used to fine-tune the agent. This process, called Rejection Sampling Fine-Tuning (RSFT), creates a much stronger, more robust "cold-start" model for the next cycle of RL exploration. This loop repeats, with the agent getting progressively better each time.
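The overall loop might be sketched as follows. The training steps are passed in as plain functions so the control flow stands on its own; `run_rl`, `select`, and `run_sft` are hypothetical placeholders, and the choice to fine-tune from the original base model each round (rather than from the latest RL model) is an assumption the article does not settle.

```python
from typing import Callable, List, Tuple, TypeVar

Model = TypeVar("Model")
Sample = TypeVar("Sample")


def evolve(
    base_model: Model,
    run_rl: Callable[[Model], Tuple[Model, List[Sample]]],
    select: Callable[[List[Sample]], List[Sample]],
    run_sft: Callable[[Model, List[Sample]], Model],
    iterations: int = 3,
) -> List[Tuple[Model, Model]]:
    """Alternate RL exploration and rejection-sampling fine-tuning.

    Returns one (rl_model, sft_model) pair per iteration.
    """
    cold_start = base_model
    history: List[Tuple[Model, Model]] = []
    for _ in range(iterations):
        # Stage 1: explore from the current cold-start model and collect rollouts.
        rl_model, rollouts = run_rl(cold_start)
        # Stage 2: keep only the best attempts, then fine-tune on them.
        curated = select(rollouts)
        # Assumption: fine-tuning restarts from the base model each round.
        cold_start = run_sft(base_model, curated)
        history.append((rl_model, cold_start))
    return history
```

Each iteration's fine-tuned model becomes the starting point for the next round of RL, which is what lets the loop compound its gains.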

Show Me the Numbers! (Performance Highlights)

EvolveSearch doesn't just sound good in theory; it delivers stunning real-world results. The paper demonstrates its performance across seven challenging multi-hop question-answering benchmarks.

After just three iterations, EvolveSearch achieves a final average in-domain score of 76.2% and an out-of-domain score of 53.7%, outperforming all other baseline methods.

This represents an average improvement of 4.7% over the previous state-of-the-art across all seven benchmarks.

The progress is clear and consistent, with both the RL model and the SFT model getting smarter with each iteration.

Why is EvolveSearch a Big Deal?

No Human Data Needed: It completely removes the costly bottleneck of creating human-annotated reasoning datasets, making it vastly more scalable.

True Self-Improvement: It creates a virtuous cycle where the AI agent genuinely learns from its successes to bootstrap its own performance.

State-of-the-Art Performance: It has demonstrably surpassed previous top methods on a wide range of complex, multi-step search tasks.

Better Generalization: The agent learns underlying reasoning skills that can be applied broadly, not just to the specific types of data it was trained on.

What's Next for EvolveSearch?

The journey is far from over. The team behind EvolveSearch plans to:

Design a more efficient "streaming" system for filtering rollouts to reduce the computational cost of the training process.

Expand the agent's toolkit beyond web search to include other capabilities.

Investigate its performance on an even wider array of agentic tasks.

Conclusion

EvolveSearch represents a significant leap forward in creating autonomous AI agents. By synergistically combining reinforcement learning and supervised fine-tuning in a self-correcting loop, it pushes the boundaries of what's possible in automated information seeking. This technology is a key enabler for a future where powerful, personalized AI is not just a passive repository of knowledge, but an active, learning partner in our quest for information.