As Large Language Models (LLMs) advance by leaps and bounds, older search paradigms are starting to look obsolete. For years, researchers have relied heavily on Google, which handles billions of queries every day; now AI systems are becoming the new tool for finding information. According to recent surveys, 31% of Gen Z and Millennials have recently been searching on AI platforms and chatbots, suggesting that an entirely new mode of information retrieval is taking hold.

These search paradigms, from old keyword-based engines to basic LLM chatbots, fall short when research is heavyweight and requires deep analysis and multi-step synthesis. Simple retrieval does not work for nuanced queries, while standalone LLMs struggle with knowledge cutoffs and hallucination. This is where Agentic Deep Research comes in as a groundbreaking solution.

What is Agentic Deep Research?

Agentic Deep Research represents a shift in both the terminology and the paradigm of information retrieval. Whereas conventional engines return mere lists of links and chatbot applications often give a fixed answer with little explanation, these systems use autonomous reasoning agents capable of planning, executing, and adapting their search strategy on the fly. They are not mere information retrieval systems but agents that conduct full-scale research much as a competent human researcher would, only faster, since they can work through enormous amounts of information in minutes rather than hours or days.

“Agentic” refers to the system's autonomous decisions about what to search for, how to refine its queries based on intermediate results, and when to change the direction of its research. In a nutshell, Agentic Deep Research goes beyond advanced LLM-based reasoning alone and carries out multi-step research tasks through an autonomous, agent-style system.

The key components that make Agentic Deep Research possible include:

- Multi-step reasoning capabilities that allow the system to build upon previous findings

- Dynamic query generation that adapts based on discovered information.

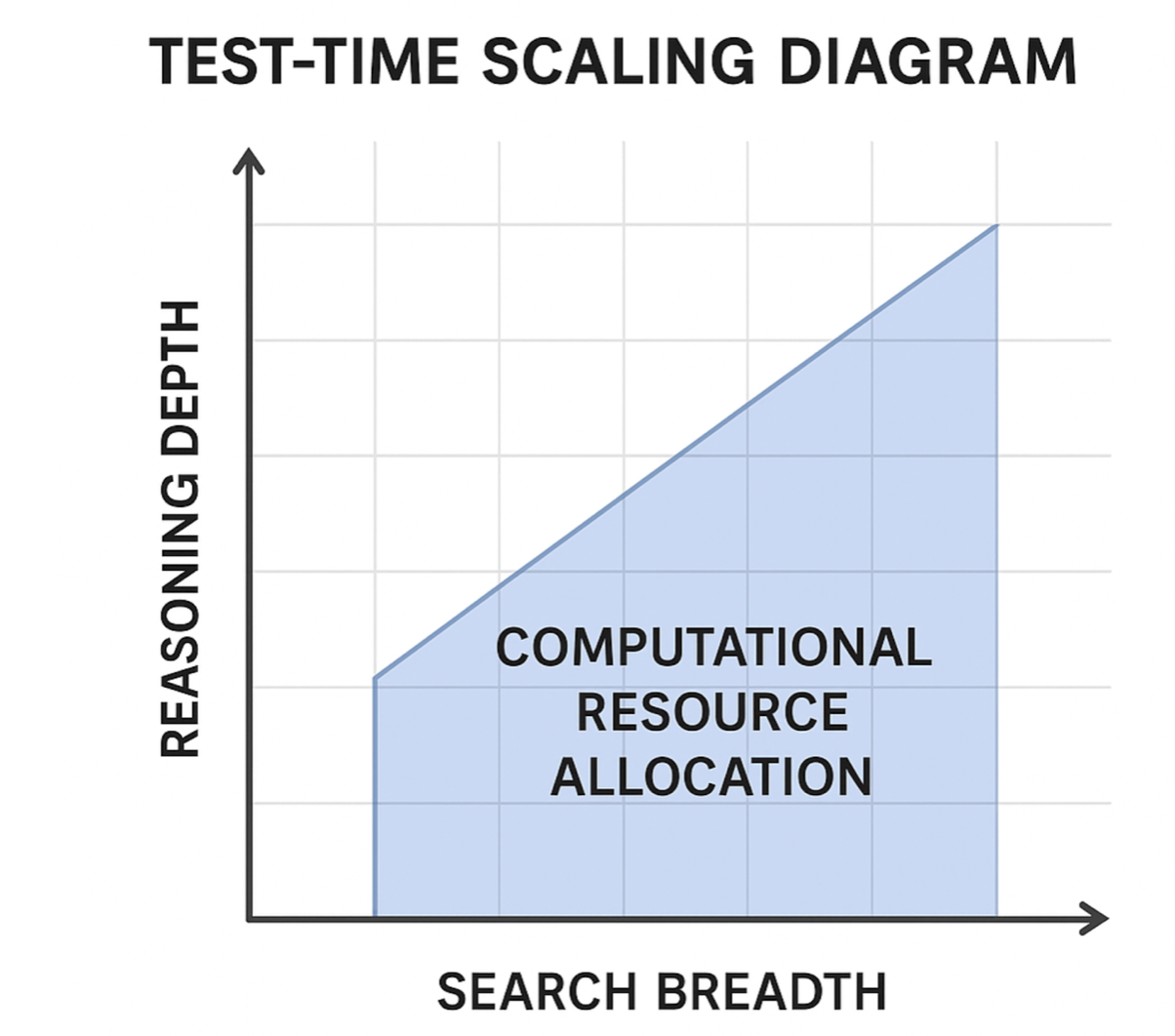

- Test-time scaling that allocates computational resources to improve answer quality

- Autonomous planning that determines the optimal research path

- Information synthesis that combines findings from multiple sources into coherent insights.
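To make the components above more concrete, here is a minimal sketch of how they might fit together in a single loop. Everything in it is an assumption for illustration: the `CORPUS` and `FOLLOW_UPS` dictionaries stand in for a real search backend and an LLM that proposes the next query, and `max_steps` is a crude stand-in for a test-time compute budget.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical toy corpus standing in for a real search backend.
CORPUS = {
    "solar power": "Solar power output depends on panel efficiency.",
    "panel efficiency": "Panel efficiency is limited by cell temperature.",
    "cell temperature": "Cell temperature rises with ambient heat.",
}

# Hypothetical map from a finished query to the follow-up it suggests.
# A real system would ask an LLM to generate this from the finding itself.
FOLLOW_UPS = {
    "solar power": "panel efficiency",
    "panel efficiency": "cell temperature",
}


@dataclass
class ResearchState:
    findings: List[str] = field(default_factory=list)
    visited: List[str] = field(default_factory=list)


def search(query: str) -> Optional[str]:
    """Look the query up in the toy corpus."""
    return CORPUS.get(query)


def next_query(query: str) -> Optional[str]:
    """Dynamic query generation: pick the next query from the last one."""
    return FOLLOW_UPS.get(query)


def research(question: str, max_steps: int = 5) -> ResearchState:
    """Run a multi-step loop, building on earlier findings until the
    step budget runs out or no new query is proposed."""
    state = ResearchState()
    query: Optional[str] = question
    for _ in range(max_steps):
        if query is None or query in state.visited:
            break
        state.visited.append(query)
        result = search(query)
        if result is not None:
            state.findings.append(result)
        query = next_query(query)
    return state


def synthesize(state: ResearchState) -> str:
    """Naive synthesis: join the findings into one passage."""
    return " ".join(state.findings)
```

Raising `max_steps` lets the loop follow a longer chain of findings at the price of more searches, which is the trade that test-time scaling makes.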

How Does Agentic Deep Research Work?

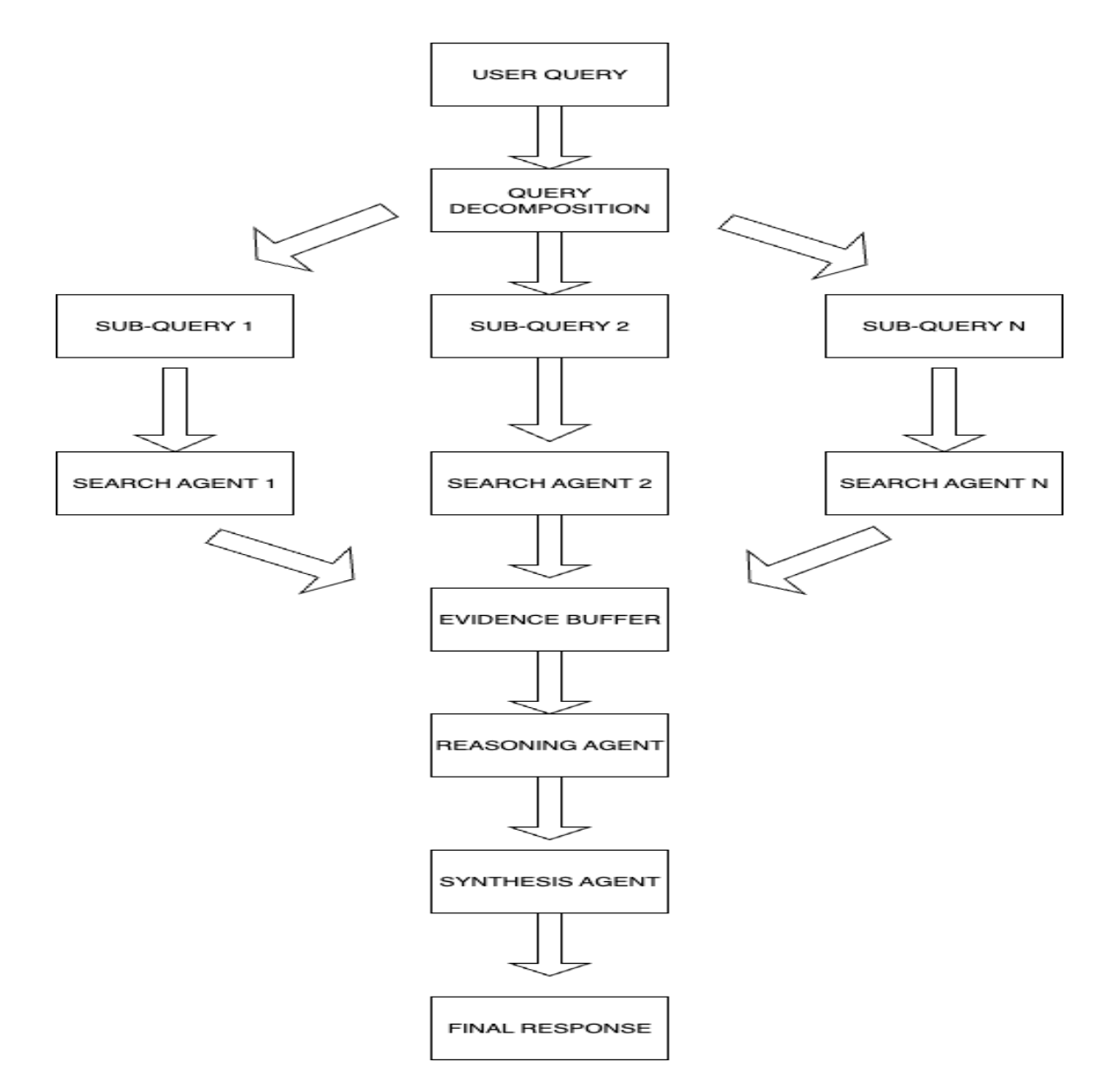

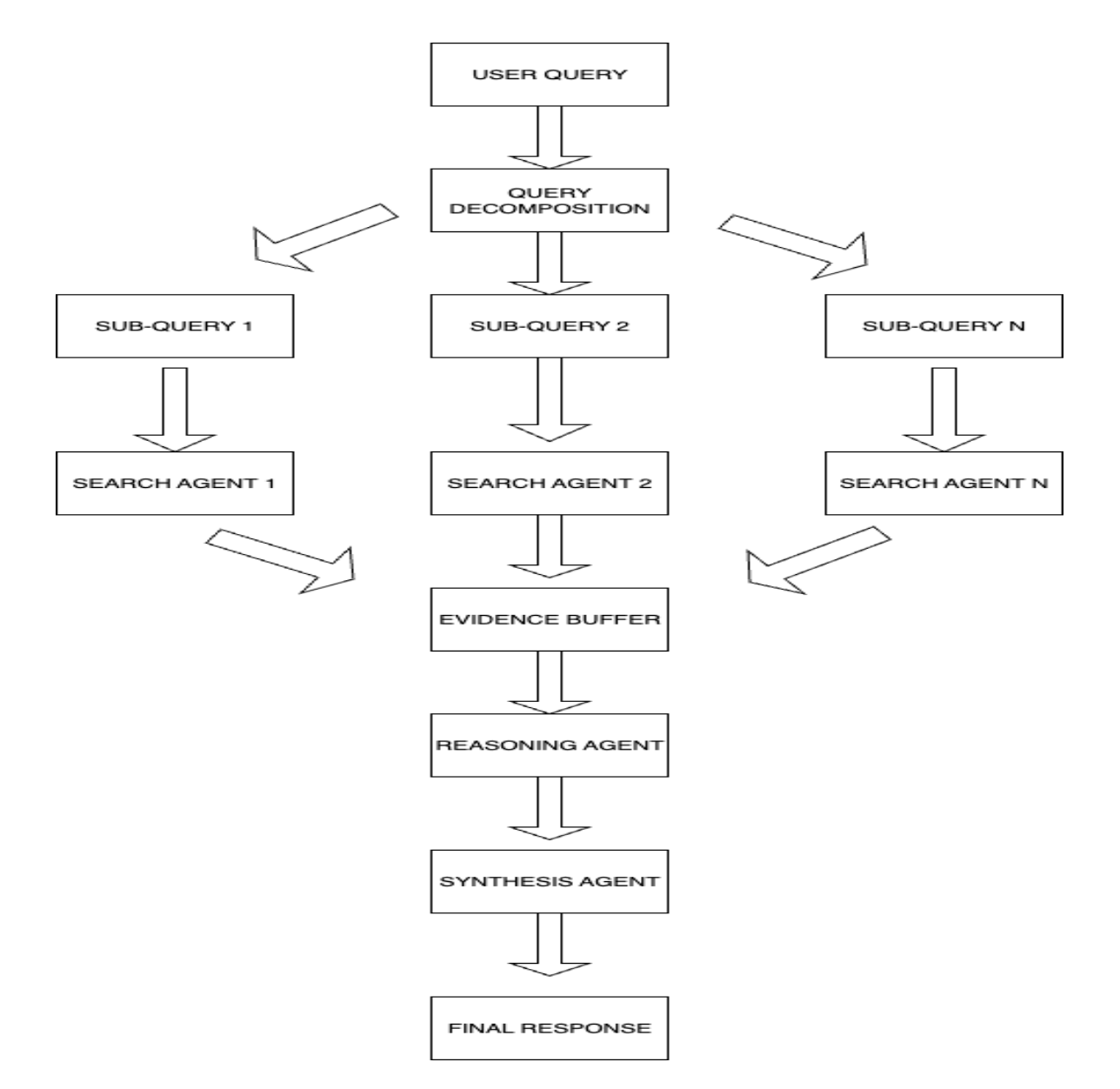

The architecture of Agentic Deep Research systems is a different breed of search system. Instead of a simple crawl-index-rank pipeline, it relies on a multi-agent workflow that resembles how humans conduct research.

It all starts when the user submits a complex query or research question. Instead of blindly searching for keywords, a reasoning agent is activated to analyze the actual intent and scope of the query. This agent examines the question and identifies the key concepts, possible sub-questions, and the kind of information required to answer it completely.

The next phase is query decomposition, that is, breaking a complex question down into smaller, easier sub-queries. For instance, the question “How do climate change policies in Nordic countries compare to those in developing nations?” would be broken down into several discrete research paths: finding out about the climate policies of Nordic countries, researching the approaches of developing nations, and then comparing the two frameworks.

Multiple search agents are then launched to work in parallel, gathering information from a broad range of sources. The agents do not just search for keywords; they apply advanced reasoning techniques such as multi-hop reasoning, that is, following chains of related information across multiple documents and sources. Because the system is agentic, it can decide autonomously what to search for, how to refine queries based on intermediate findings, and when to change direction on a research trail. At its core, Agentic Deep Research combines the reasoning capabilities of advanced LLMs with agent-based systems that act autonomously to perform multi-step research tasks.
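One way to picture the decomposition of the Nordic example is as a small dependency graph, where the comparison step can only run after both research steps have finished. The sketch below is an illustration under assumptions: the `SubQuery` type and the hard-coded plan are hypothetical, and a real system would presumably have an LLM generate the plan.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import List, Tuple


@dataclass(frozen=True)
class SubQuery:
    key: str
    text: str
    depends_on: Tuple[str, ...] = ()


def decompose_example() -> List[SubQuery]:
    """Hard-coded plan for the Nordic example (hypothetical; an LLM
    would normally produce this from the user's question)."""
    return [
        SubQuery("nordic", "What climate policies have Nordic countries adopted?"),
        SubQuery("developing", "What climate policy approaches do developing nations take?"),
        SubQuery(
            "compare",
            "How do the two policy frameworks compare?",
            depends_on=("nordic", "developing"),
        ),
    ]


def execution_order(plan: List[SubQuery]) -> List[str]:
    """Order sub-queries so each one runs after the ones it depends on."""
    graph = {sq.key: set(sq.depends_on) for sq in plan}
    return list(TopologicalSorter(graph).static_order())
```

Calling `execution_order(decompose_example())` places `compare` last, while the two independent research paths come first and could be handed to separate agents.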
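The parallel fan-out described above could look roughly like the following. This is a sketch under stated assumptions: the `SOURCES` dictionary is a hypothetical in-memory stand-in for real search backends, and it covers only the parallel gathering step, not multi-hop reasoning.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Optional, Tuple

# Hypothetical in-memory sources; each maps a query to a snippet.
SOURCES: Dict[str, Dict[str, str]] = {
    "news": {"nordic climate policy": "Nordic states tax carbon emissions."},
    "papers": {"nordic climate policy": "Carbon pricing studies cover Sweden and Norway."},
    "reports": {},
}


def search_source(source: str, query: str) -> Tuple[str, Optional[str]]:
    """One search agent: query a single source and return its hit, if any."""
    return source, SOURCES[source].get(query)


def parallel_search(query: str, max_workers: int = 4) -> Dict[str, str]:
    """Launch one agent per source and collect the non-empty results."""
    names = list(SOURCES)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = pool.map(lambda name: search_source(name, query), names)
        return {name: hit for name, hit in results if hit is not None}
```

Threads suit this case because real search calls spend most of their time waiting on the network; sources that return nothing are simply dropped from the result.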

Advantages of Agentic Deep Research

Comprehensive Understanding: This is regarded as the chief advantage, since it makes complex topics comprehensively understandable. Rather than leaving the user to sift through scores of search results on their own, these systems identify the main themes, bring together opposing opinions, and provide a nuanced analysis that would typically require considerable human expertise. Multi-agent systems also improve accuracy and reduce the risk of the misinformation and hallucination that normally plague traditional LLM systems.

Adaptive Intelligence: These systems can adapt their research strategy to the requirements of the query. Simple factual questions are answered promptly with targeted searches, while complex analytical questions receive deeper investigation with multi-step reasoning and wide consultation of sources.

Scalable Expertise: Agentic Deep Research has democratized research capabilities that were previously available only to trained researchers and analysts. By automating research processes, these systems allow anyone to carry out in-depth, professional-level research on complex topics.

Time-Efficient: Agentic Deep Research can do in a few minutes what might take a human researcher hours or days to accomplish. This efficiency gain can be a game-changer for fields such as journalism, market research, academic research, and business intelligence.
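As a rough illustration of this kind of adaptation, the sketch below picks a research depth from the wording of the query. The keyword heuristic, the cue list, and the step and source limits are all assumptions made for the example; a production system would more plausibly use an LLM to judge complexity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResearchPlan:
    mode: str
    max_steps: int
    max_sources: int


# Hypothetical cue phrases that hint at an analytical question.
ANALYTICAL_CUES = ("compare", "why", "how do", "impact", "trade-off", "versus")


def choose_plan(query: str) -> ResearchPlan:
    """Pick a research depth from a crude count of analytical cues."""
    q = query.lower()
    cues = sum(cue in q for cue in ANALYTICAL_CUES)
    if cues == 0 and len(q.split()) <= 8:
        # Short factual question: one targeted search is enough.
        return ResearchPlan("targeted", max_steps=1, max_sources=3)
    if cues <= 1:
        return ResearchPlan("standard", max_steps=3, max_sources=8)
    # Several cues: multi-step investigation across many sources.
    return ResearchPlan("deep", max_steps=8, max_sources=20)
```

Under this heuristic, a short factual question gets the `targeted` plan, while the Nordic policy comparison used earlier matches two cues and gets the `deep` plan.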

Challenges and Limitations

Computational Costs: One main challenge is the huge computational power required. Test-time scaling, while effective, is still quite expensive at scale. Running multiple agents in parallel, each performing deep reasoning on its task, consumes far more computational resources and incurs far higher operational costs than any traditional search engine.

Quality Control and Verification: Even though such systems implement numerous verification methods, guaranteeing the accuracy and reliability of research results still proves quite difficult. Their complexity makes it almost impossible to trace how conclusions are actually reached, and in practice the systems remain vulnerable to the biases in their training data or source material.

Latency vs Depth Trade-offs: There is a tension between thorough research results and reasonably timely responses. On one side, users demand comprehensive analysis; on the other, they expect relevant information back quickly. Balancing these two conflicting demands calls for sophisticated resource allocation strategies along with careful user experience design.

Ethics and Legal Issues: The ability of these systems to access and synthesize vast amounts of information raises serious questions concerning intellectual property, fair use, and attribution. Ensuring that such systems respect copyright law and provide proper attribution becomes increasingly complex as their capabilities grow.
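One simple resource allocation strategy is a time budget: keep running research steps until a deadline passes, then return whatever has been gathered. The sketch below assumes each research step is a plain callable returning a finding; the function name and the injectable `clock` parameter are hypothetical choices made so the behavior can be checked deterministically.

```python
import time
from typing import Callable, Iterable, List


def research_within_budget(
    steps: Iterable[Callable[[], str]],
    budget_seconds: float,
    clock: Callable[[], float] = time.monotonic,
) -> List[str]:
    """Run research steps in order until the time budget is used up."""
    deadline = clock() + budget_seconds
    findings: List[str] = []
    for step in steps:
        # Check the deadline before starting each step; a step that has
        # already started is allowed to finish.
        if clock() >= deadline:
            break
        findings.append(step())
    return findings
```

A larger `budget_seconds` buys more depth at the price of a slower answer. Because the check happens only between steps, a single slow step can still overrun the budget, so a stricter design would also need per-step timeouts.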

Current Implementations and Future Outlook

Leading technology companies have already implemented Agentic Deep Research systems. Deep Research by OpenAI, powered by a customized version of the o3 model, has shown strong results on tough benchmarks. On the benchmark called "Humanity's Last Exam," it attained 26.6% accuracy on expert-level questions across many academic fields, greatly exceeding the scores of traditional models.

Agentic Deep Research has also arrived in open source, with quite a few projects attaining critical mass. DeepSearcher and Open Deep Research gained thousands of stars on GitHub only weeks after their release, while research agents built on frameworks like LangChain and AutoGen are bringing this capability to small organizations.

Conclusion

Agentic Deep Research represents a paradigm shift in information discovery, promising to democratize access to knowledge as well as its analysis and synthesis. By linking the reasoning mechanisms of LLMs with autonomous agent-based systems, these technologies gain research and analysis capabilities that earlier search tools lacked.

Challenges remain, including computational costs, quality assurance, and ethics, yet the potential upside is huge. These systems will keep improving over time, and I would posit that within the next decade they could become as fundamental to information discovery as today's search engines.

The shift from conventional search patterns towards Agentic Deep Research is more than a technological upgrade; it is an entirely different conception of how humans relate to information. It promises a future of information discovery that is intelligent, exhaustive, and universally accessible.

The days of simply "looking" for something are slowly winding down. The future of intelligent research is already here.